
Debugging

How well Latch sleeps at night having Memfault in place

Accelerate development and optimize in-field device quality with remote debugging and monitoring

ShipHero's Observability Journey to Seamless Software Debugging

ShipHero needed a robust, cost-efficient observability platform to support DevOps, customer support, and more. Committed to timely service, ShipHero recognizes that the seamless performance of its software is paramount to customer satisfaction. To maintain this high standard, the development team needs the right data at their fingertips to quickly find and solve problems as they occur.

The Dangers of Using VC Funded Companies

TrackJS started ten years ago. To date, the only funding TrackJS ever received was the initial founder investment of $4,500 (a whopping $1,500 per founder). Today, you’d call us a “bootstrapped” business. We’re proud of that fact. It means there are no outside investors. No one to make us build a product we don’t want to build. And no one who can pull the plug if the growth chart doesn’t look like a hockey stick.

A Practical Guide to Debugging Browser Performance With OpenTelemetry

So you’ve taken a look at the core web vitals for your site and… it’s not looking good. You’re overwhelmed, and you don’t know what change to make because everything seems like too big of a project to make a real difference. There are so many measurements to keep track of and the standards cited seem even scarier. This is extremely normal. Web performance standards can feel impossible to meet for a lot of us.

Introducing CoTerm, your collaborative terminal for pair programming and debugging

For too long, engineers have had to piece together an unwieldy combination of tools to collaboratively debug and resolve incidents while pair programming in real time. These activities normally require developers to work individually through a terminal, but the patchwork solutions that allow teams to work together in terminals all have significant drawbacks.

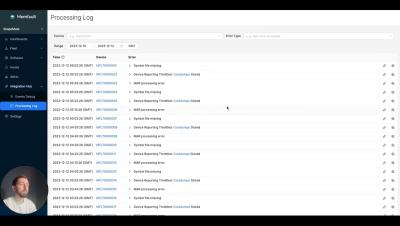

How Embedded Device Observability Helps Latch Build Ultra-Reliable Products

Diving into JTAG - Overview (Part 1)

As the first segment of a three-part series on JTAG, this post will give an overview of JTAG to set up some more in-depth discussions on debugging and JTAG Boundary-Scan. We will dive into the intricacies of the interface, such as the Test Access Port (TAP), key registers, instructions, and JTAG’s finite state machine.