Generative AI Is Easy to Demo but Hard to Deliver: The MVP Illusion

Image Source: depositphotos.com

This expert article is written by Dmitry Bobolev, founder of Froxy Labs, a London-based startup behind Froxi AI, the UK's first AI-powered mobile app publishing assistant. In under 12 months, Dmitry has scaled Froxy Labs to over $100K in revenue and a team of seven. With a strong technical foundation and hands-on startup experience, he also leads FFDG London, one of the city's largest offline AI coding communities.

Generative AI has become the easiest demo in tech history, but one of the hardest products to operationalise.

Walk into any startup pitch meeting, and you'll witness something remarkable: entrepreneurs can now showcase seemingly revolutionary AI capabilities in minutes. A few prompts to GPT-4, some impressive outputs from Midjourney, or a quick code generation session with GitHub Copilot, and investors are nodding appreciatively. The wow factor is instant, the potential appears limitless, and the competitive advantage seems obvious.

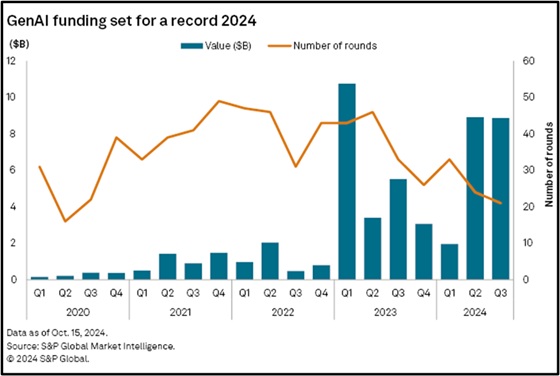

This is the new reality of our post-ChatGPT world. Since OpenAI's breakthrough captured global attention in late 2022, we've witnessed an unprecedented surge in AI-powered startups. Global generative AI funding nearly tripled in 2024 to $56 billion, and generative AI now accounts for 48% of total AI investment, up from just 8% in 2022.

Source: S&P Global

But here's what the flashy demos don't reveal: while generative AI may be the easiest technology to demonstrate convincingly, it's proving to be one of the most challenging to deliver as a reliable, scalable business solution. Early demos are seductive precisely because they disguise the deep product and delivery challenges that emerge the moment you try to move from prototype to production.

Why Generative AI Demos Are So Compelling

To understand why we're facing this delivery gap, we first need to recognise what makes generative AI uniquely demo-friendly compared to previous breakthrough technologies.

Consider the struggle that earlier transformative technologies faced in their demonstration phases. Voice recognition systems in the 1990s required extensive training just to recognise simple commands reliably. Virtual reality needed years of hardware refinement before it could deliver experiences that didn't induce nausea. Autonomous vehicles spent over a decade in controlled testing environments before they could navigate real streets safely, and even now many deployments still depend on safety drivers and geographic restrictions.

Generative AI turned this pattern on its head. From the moment ChatGPT launched, it could produce coherent, human-like text responses to virtually any prompt.

This immediate accessibility created what I call the "single prompt illusion." Unlike traditional software demos that require elaborate setups and carefully orchestrated user journeys, a generative AI demo can deliver impressive results with just one well-crafted input. Type "Write a business plan for a sustainable fashion startup" into ChatGPT, and you'll get a comprehensive, professional-looking document in seconds. Show that to an investor, and it feels like you're witnessing the future of business automation.

The psychological impact is profound. When humans see AI systems producing outputs that look indistinguishable from expert human work, whether it's code, copy, or creative content, we intuitively assume the underlying capability is robust and production-ready. After all, if it can write like a human, surely it can work like a human, right?

The Seductive MVP Mirage

This brings us to perhaps the most dangerous misconception plaguing the generative AI startup ecosystem: the belief that a compelling demo equals a minimum viable product.

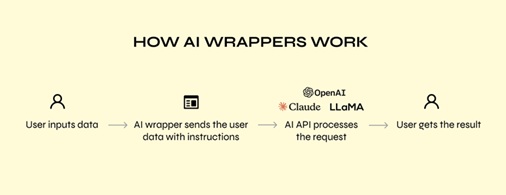

Walk through the portfolios of major VC firms today, and you'll find hundreds of startups that essentially amount to what the industry calls "thin wrappers" around foundation models. These companies take a powerful underlying system like GPT-4, Claude, or Stable Diffusion, add a user interface and some basic prompt engineering, then present this combination as a revolutionary new product.

The pattern is remarkably consistent across verticals. In legal tech, startups demo contract analysis tools that can seemingly review complex agreements in minutes. In marketing, companies showcase AI systems that can generate complete campaign strategies from a brief description. In software development, new platforms promise to convert natural language requirements into fully functional applications.

Source: Scrapbook

These demos are genuinely impressive, and they've convinced investors to deploy capital at unprecedented rates. Late-stage VC deal sizes for GenAI companies skyrocketed from $48 million in 2023 to $327 million in 2024, reflecting the market's enthusiasm for AI-powered solutions.

But here's the fundamental problem: the underlying foundation models that power these demos are not actually "viable" in a traditional business sense. They suffer from systemic issues that make them unsuitable for mission-critical applications without extensive additional engineering: hallucinations, high latency, limited context windows, and inconsistent outputs.
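
One common form of that additional engineering is refusing to trust raw model output: the application checks each response against the structure it expects and retries, or gives up explicitly, when the check fails. The sketch below is a minimal illustration under stated assumptions: the model is exposed as a plain `generate(prompt) -> str` function (a hypothetical stand-in, not any vendor's API), and the application expects a JSON object with known keys. `call_with_validation` and `fake_generate` are illustrative names.

```python
import json
from typing import Callable, Optional


def call_with_validation(
    generate: Callable[[str], str],  # hypothetical model client: prompt in, text out
    prompt: str,
    required_keys: set[str],
    max_attempts: int = 3,
) -> Optional[dict]:
    """Accept model output only if it is a JSON object containing every
    required key; otherwise retry, and give up after max_attempts."""
    for _ in range(max_attempts):
        raw = generate(prompt)
        try:
            parsed = json.loads(raw)
        except json.JSONDecodeError:
            continue  # malformed output: ask again
        if isinstance(parsed, dict) and required_keys.issubset(parsed):
            return parsed
    return None  # caller decides what failure means, e.g. escalate to a human


# Usage with a scripted stand-in for a real model client.
scripted = iter([
    "Sure! Here is the analysis:",                # not JSON
    '{"clause": "termination"}',                  # JSON, but missing "risk"
    '{"clause": "termination", "risk": "high"}',  # passes validation
])


def fake_generate(prompt: str) -> str:
    return next(scripted)


result = call_with_validation(fake_generate, "Review this contract", {"clause", "risk"})
print(result)  # {'clause': 'termination', 'risk': 'high'}
```

A check like this catches malformed or incomplete responses, and each retry is another paid model call. It does not detect a well-formed answer that is factually wrong; that still needs checks against source data or human review.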

The Complexity of AI Delivery

The gap between demo and delivery becomes clear the moment these AI-powered startups attempt to scale beyond their initial prototype phase. What looked like a straightforward engineering challenge in the demo environment suddenly reveals itself as a tangle of interrelated technical and commercial problems.

Traditional software systems are designed around deterministic logic: given the same input, they produce the same output, every time. This predictability forms the foundation of software testing, quality assurance, and user experience design.

Generative AI systems operate on fundamentally different principles. They are probabilistic by design: a large language model generates its output one token at a time, sampling each token from a probability distribution learned from its training data, so the same prompt can produce different outputs on different runs. Around 74% of companies struggle to achieve and scale value from AI initiatives, with reliability issues representing a major barrier.
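
The contrast between deterministic and probabilistic behaviour can be shown with a toy example. This is a deliberately simplified sketch, not how any particular model is implemented: the three candidate "tokens" and their scores (`logits`) are invented for illustration, whereas a real language model samples from a vocabulary of many thousands of tokens at every step. The `temperature` parameter scales the scores before a softmax; lower values concentrate probability on the top-scoring option.

```python
import math
import random


def deterministic_total(prices: list[float]) -> float:
    # Traditional software: same input, same output, every time.
    return sum(prices)


def sample_next_token(logits: dict[str, float], temperature: float,
                      rng: random.Random) -> str:
    # Toy generative step: convert scores to probabilities with a
    # temperature-scaled softmax, then draw one token at random.
    scaled = {tok: score / temperature for tok, score in logits.items()}
    peak = max(scaled.values())  # subtract the max for numerical stability
    weights = [math.exp(s - peak) for s in scaled.values()]
    return rng.choices(list(scaled), weights=weights, k=1)[0]


logits = {"approve": 2.0, "review": 1.5, "reject": 0.5}  # illustrative scores
rng = random.Random(0)

print([deterministic_total([1.0, 2.0]) for _ in range(3)])  # [3.0, 3.0, 3.0]
# Same "prompt" (the same scores) five times; the answers can differ.
print([sample_next_token(logits, temperature=1.0, rng=rng) for _ in range(5)])
```

Lowering the temperature makes outputs more repeatable at the cost of variety, which is one reason testing and QA practices built for deterministic code do not transfer directly.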

Perhaps the most sobering reality check comes when startups attempt to scale their AI applications beyond the demonstration phase. More than 90% of CIOs said that managing cost limits their ability to get value from AI for their enterprise, according to recent Gartner research.

The inference costs that seem manageable for a few demo queries quickly become prohibitive at production scale. Consider a typical B2B SaaS application serving 1,000 enterprise customers. If each customer generates 100 AI queries per day and each query costs $0.05 in model inference fees, that is $5,000 a day, or $150,000 a month in API costs alone, before accounting for any infrastructure, engineering, or business costs. For many startups, these economics simply don't work without charging prices that put them out of reach for most potential customers.
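
The cost arithmetic is simple enough to keep as a small model that can be re-run as usage or pricing changes. This sketch uses the figures from the example above and assumes a 30-day month and a flat per-query price; real APIs typically bill per input and output token, so `cost_per_query` here is a simplifying assumption. The $0.005 price in the last line is a hypothetical cheaper model, included only to show how the total scales.

```python
def monthly_inference_cost(customers: int, queries_per_customer_per_day: int,
                           cost_per_query: float, days_per_month: int = 30) -> float:
    # Model API spend only: infrastructure, engineering and other
    # business costs come on top of this figure.
    return customers * queries_per_customer_per_day * cost_per_query * days_per_month


total = monthly_inference_cost(customers=1_000, queries_per_customer_per_day=100,
                               cost_per_query=0.05)
per_customer = total / 1_000

print(f"Monthly API cost: ${total:,.0f}")         # Monthly API cost: $150,000
print(f"Per customer: ${per_customer:,.0f}")      # Per customer: $150
# Hypothetical cheaper model at $0.005 per query:
print(f"${monthly_inference_cost(1_000, 100, 0.005):,.0f}")  # $15,000
```

The per-customer line is the useful one for pricing: at these assumptions, each customer must pay at least $150 a month just to cover model inference, before any margin.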

Some companies attempt to address this by switching to smaller, cheaper models, only to discover that the impressive capabilities showcased in their demos degrade significantly. Others try to optimise prompts or implement caching strategies, but these approaches often reduce the flexibility and responsiveness that made the original demo compelling.
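
A minimal sketch of the caching approach shows both the saving and the trade-off. It assumes an exact-match cache keyed on a whitespace- and case-normalised prompt; `PromptCache` and `fake_generate` are hypothetical names, not a specific library's API, and a production cache would also need expiry and invalidation when the underlying data changes.

```python
from typing import Callable


class PromptCache:
    """Exact-match response cache keyed on a whitespace- and case-normalised
    prompt. A hypothetical helper, not a specific library's API."""

    def __init__(self, generate: Callable[[str], str]) -> None:
        self._generate = generate          # the paid model call
        self._store: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(prompt: str) -> str:
        # Collapse whitespace and lowercase so trivially different prompts
        # share an entry. Anything else (new wording, new data) is a miss.
        return " ".join(prompt.lower().split())

    def get(self, prompt: str) -> str:
        key = self._key(prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]        # frozen answer, no fresh generation
        self.misses += 1
        response = self._generate(prompt)
        self._store[key] = response
        return response


# Usage with a stand-in model that records every billable call.
calls: list[str] = []


def fake_generate(prompt: str) -> str:
    calls.append(prompt)
    return f"answer #{len(calls)}"


cache = PromptCache(fake_generate)
cache.get("Summarise clause 4")
cache.get("  summarise   CLAUSE 4 ")  # same key after normalisation: cache hit
cache.get("Summarise clause 5")        # different wording: new paid call
print(cache.hits, cache.misses, len(calls))  # 1 2 2
```

The second request returned exactly the text generated for the first, which is where the loss of flexibility comes from: cached users never see a fresh or improved answer. The savings also depend on users repeating themselves, and the normalisation rule is itself a judgement call, since lowercasing would wrongly merge prompts where case carries meaning.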

What Actually Creates Lasting Value

Despite these challenges, some AI-powered companies are successfully navigating the transition from demo to delivery. The common thread among sustainable AI businesses is their recognition that the AI capability itself is just one component of a much more comprehensive value proposition.

The most defensible AI applications combine foundation model capabilities with proprietary datasets and deep domain knowledge. Rather than relying solely on the general knowledge embedded in public training data, these companies curate specialised datasets that enhance model performance for specific use cases.

Consider companies like Harvey in legal tech or Jasper in marketing content. Their value doesn't come from having better AI models; it comes from training and fine-tuning those models on proprietary legal documents or marketing performance data that competitors can't access. This approach transforms a generic AI capability into a specialised tool that produces more relevant, accurate results for domain-specific tasks.

The key insight is that the AI model becomes a means of leveraging proprietary data, not the primary source of value. This shift in perspective leads to different product decisions, go-to-market strategies, and competitive positioning.

The most successful AI products don't ask users to change their existing workflows: they enhance those workflows invisibly. Instead of building standalone AI applications that require users to learn new interfaces and processes, these companies embed AI capabilities directly into the tools and platforms their customers already use.