How Local-First AI Agents Are Reshaping IT Operations Automation


IT operations teams have spent the last decade embracing automation — from auto-scaling rules and CI/CD pipelines to AIOps platforms that correlate alerts across sprawling infrastructure. Yet a fundamental tension remains unresolved: the most powerful AI automation tools require you to route sensitive operational data through external cloud services you do not control.

For teams managing production environments, this is not an abstract compliance checkbox. It is a concrete risk. Log streams contain customer data. Configuration files expose internal architecture. Alert payloads reveal business-critical thresholds. Sending all of this to a third-party AI provider to get intelligent automation in return forces ops teams into an uncomfortable trade-off between capability and control.

A new category of tooling — local-first AI agents — is eliminating that trade-off entirely.

The Automation Paradox in IT Operations

The promise of AIOps has always been straightforward: let machine learning reduce alert noise, accelerate root cause analysis, and automate routine incident response. And the results can be significant. According to Gartner's 2025 IT Operations report, organizations using AI-driven automation reduced mean time to resolution (MTTR) by 40% on average.

But adoption has been slower than expected. A 2025 Dynatrace survey found that 70% of CIOs expressed concern about sending operational telemetry to external AI platforms, citing data residency requirements, regulatory constraints, and the risk of exposing internal system architecture.

The result is a paradox: teams that need automation the most — those drowning in alert noise and on-call fatigue — are often the least willing to hand their most sensitive data to yet another SaaS vendor.

What Makes Local-First AI Agents Different

Unlike cloud-hosted AIOps platforms, local-first AI agents run entirely on infrastructure the team controls — a Linux server, a Mac workstation, a NAS device, or any machine within the network perimeter. Data never leaves the environment. The agent processes logs, triggers actions, and stores its operational memory locally.

What distinguishes these from simple scripts or cron jobs is persistent autonomy. Modern agent runtimes support:

- Scheduled execution — cron-style recurring tasks like nightly log audits or morning infrastructure health checks

- Event-driven triggers — reacting to webhook events, alert payloads, or message bus signals in real time

- Long-term memory — maintaining context across runs so the agent learns patterns specific to your environment

- Multi-platform integration — pushing notifications and taking actions across Slack, Telegram, GitHub, and internal tools via composable protocols like MCP

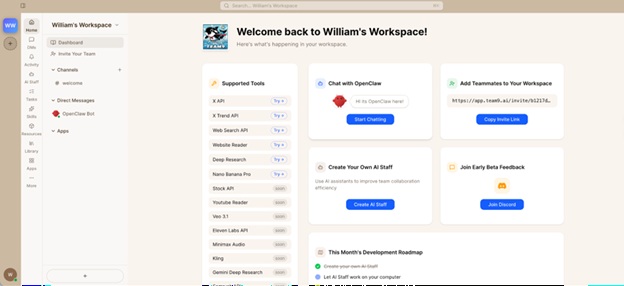

OpenClaw, an open-source agent runtime built on this local-first architecture, demonstrates how far the model has evolved. It provides a production-grade execution environment with transparent Markdown-based memory, permission-scoped tool access, and built-in safety guardrails — all running on hardware the operations team owns. There is no API metering. There is no data leaving the building.
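To make the idea of transparent, file-based memory concrete, here is a minimal sketch of an agent appending notes to a Markdown file and reading them back by topic. It is not OpenClaw's actual memory format or API; the function names `remember` and `recall`, the file layout, and the topic tags are assumptions chosen purely for illustration.

```python
from datetime import date
from pathlib import Path


def remember(memory_file, topic, note, today=None):
    """Append one dated, topic-tagged bullet to a Markdown memory file.

    Hypothetical layout: "- YYYY-MM-DD [topic] note", one bullet per line.
    """
    stamp = (today or date.today()).isoformat()
    with Path(memory_file).open("a", encoding="utf-8") as fh:
        fh.write(f"- {stamp} [{topic}] {note}\n")


def recall(memory_file, topic):
    """Return every stored note tagged with the given topic, oldest first."""
    path = Path(memory_file)
    if not path.exists():
        return []
    tag = f"[{topic}]"
    lines = path.read_text(encoding="utf-8").splitlines()
    # Drop the leading "- " bullet marker from each matching line.
    return [line[2:] for line in lines if tag in line]
```

Because the memory is an ordinary text file, an engineer can open it in any editor, review what the agent has recorded, and correct or delete entries by hand.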

Practical Use Cases for Ops Teams

The applications map directly to daily operational pain points:

Automated On-Call Briefings. An agent that runs at 07:00 each morning, scans overnight alerts, cross-references them against known issues and change logs, and delivers a prioritized summary to the on-call engineer's Slack channel. No more spending the first 30 minutes of a shift manually triaging a backlog.
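As a rough sketch of the briefing step, the function below ranks overnight alerts by severity and marks each one as a known issue or a new one. The alert fields (`service`, `severity`, `summary`), the severity names, and the `known_issues` mapping are all assumptions for illustration; scheduling the run and posting the text to Slack are left out.

```python
# Hypothetical severity ranking; lower number means higher priority.
SEVERITY_ORDER = {"critical": 0, "warning": 1, "info": 2}


def build_briefing(alerts, known_issues):
    """Return a prioritized plain-text summary of overnight alerts.

    alerts: list of dicts with "service", "severity", and "summary" keys.
    known_issues: dict mapping a service name to a ticket reference.
    """
    ranked = sorted(alerts, key=lambda a: SEVERITY_ORDER.get(a["severity"], 99))
    lines = [f"Overnight alerts: {len(ranked)}"]
    for alert in ranked:
        ticket = known_issues.get(alert["service"])
        note = f" (known issue: {ticket})" if ticket else " (new)"
        lines.append(
            f"[{alert['severity'].upper()}] {alert['service']}: {alert['summary']}{note}"
        )
    return "\n".join(lines)
```

The returned string can then be handed to whatever notification channel the team already uses.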

Intelligent Alert Correlation. Instead of forwarding every threshold breach to PagerDuty, a local agent can correlate alerts within a configurable time window, suppress duplicates, and escalate only when patterns match genuine incident signatures. Teams using this approach report 50-60% reductions in the volume of alerts that reach on-call engineers.
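One simple way to picture window-based correlation is sketched below. It groups alerts that share a service and check name, folds repeats that fire within a time window into a single group, and flags a group for escalation once it reaches a count threshold. The `Alert` fields, the five-minute window, and the threshold of three are assumptions; real incident signatures would be richer than a repeat count.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Alert:
    service: str
    check: str
    fired_at: datetime


def correlate(alerts, window=timedelta(minutes=5), escalate_after=3):
    """Group alerts by (service, check) into time windows.

    Returns a list of (key, count, escalate) tuples, one per window,
    ordered by when each window opened.
    """
    groups = []        # each entry: [key, window_start, count]
    open_groups = {}   # key -> index of its most recent group
    for alert in sorted(alerts, key=lambda a: a.fired_at):
        key = (alert.service, alert.check)
        idx = open_groups.get(key)
        if idx is not None and alert.fired_at - groups[idx][1] <= window:
            groups[idx][2] += 1  # repeat inside the window: fold it in
        else:
            open_groups[key] = len(groups)
            groups.append([key, alert.fired_at, 1])
    return [(key, count, count >= escalate_after) for key, _, count in groups]
```

In this sketch only groups whose third element is `True` would be forwarded to the paging system; everything else stays in the local log.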

Runbook Execution. When a known failure pattern is detected — disk usage above 90%, certificate expiring within 7 days, health check endpoint returning 503 — the agent executes the corresponding remediation runbook automatically and logs every action taken for audit.
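The detect, remediate, and log loop described here could look roughly like the following. The rule names, metric keys, and runbook identifiers are hypothetical; the runbooks themselves are passed in as plain callables so the sketch does not assume any particular agent runtime.

```python
import json
from datetime import datetime, timezone

# Hypothetical rules: (rule name, predicate over a metrics dict, runbook id).
RULES = [
    ("disk_high", lambda m: m.get("disk_used_pct", 0) > 90, "cleanup_disk"),
    ("cert_expiring", lambda m: m.get("cert_days_left", 999) <= 7, "renew_cert"),
    ("health_503", lambda m: m.get("health_status") == 503, "restart_service"),
]


def run_matching_runbooks(metrics, runbooks, audit_log):
    """Run the runbook for every matching rule and record each outcome.

    runbooks: dict mapping a runbook id to a callable that takes the metrics.
    audit_log: list that receives one JSON string per matched rule.
    """
    executed = []
    for name, matches, runbook_id in RULES:
        if not matches(metrics):
            continue
        action = runbooks.get(runbook_id)
        if action is None:
            outcome = "skipped: no runbook registered"
        else:
            try:
                action(metrics)
                outcome = "ok"
            except Exception as exc:  # record the failure instead of crashing
                outcome = f"failed: {exc}"
        audit_log.append(json.dumps({
            "time": datetime.now(timezone.utc).isoformat(),
            "rule": name,
            "runbook": runbook_id,
            "outcome": outcome,
        }))
        executed.append((name, outcome))
    return executed
```

Writing an audit record even for skipped or failed runbooks keeps the trail complete, which matters more for review than the happy path does.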

Change Risk Assessment. Before a deployment, an agent can review the diff, check it against historical incident patterns associated with similar changes, and flag potential risks in a pull request comment — all without exposing source code to external services.
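A very reduced version of this check is sketched below: it compares the paths touched by a change against path patterns that have been linked to past incidents. The patterns and notes in `INCIDENT_PATTERNS` are placeholder data, not real history, and a production agent would look at far more than file paths.

```python
from fnmatch import fnmatchcase

# Placeholder history: path pattern -> note about related past incidents.
INCIDENT_PATTERNS = {
    "migrations/*": "schema changes were involved in past incidents",
    "config/nginx/*": "proxy config changes were involved in past incidents",
}


def assess_change(changed_paths, patterns=INCIDENT_PATTERNS):
    """Return one risk note per changed path that matches a known pattern."""
    findings = []
    for path in changed_paths:
        for pattern, note in patterns.items():
            if fnmatchcase(path, pattern):
                findings.append(f"{path}: {note}")
    return findings
```

The resulting list could be joined into the body of a pull request comment; an empty list means no known pattern matched, not that the change is safe.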

Getting Started Without the Infrastructure Overhead

The historical barrier to self-hosted AI tooling has been operational complexity. Standing up model inference, configuring adapters, hardening security, and maintaining the stack over time demanded dedicated engineering effort that many ops teams could not justify.

This is where managed deployment platforms have changed the equation. Team9 AI Workspace eliminates the setup burden by providing plug-and-play agent deployment — no manual Node.js configuration, no adapter wiring, no security hardening checklist. Teams get a production-ready agent environment with sensible defaults in minutes rather than days.

The practical path forward for most ops teams looks like this:

- Start with one use case: morning briefings or alert correlation are the easiest wins

- Run alongside existing tools: local agents complement your monitoring stack rather than replace it

- Iterate on memory and rules: agents improve as they accumulate context about your specific environment

- Expand gradually: once the first agent proves its value, extend to runbook automation and change assessment

Conclusion

The question facing IT operations teams is no longer whether to adopt AI automation — the efficiency gains are too significant to ignore. The real question is whether you can adopt it without creating a new category of data exposure risk.

Local-first AI agents answer that question definitively. They deliver the persistent, intelligent automation that modern ops demands while keeping every byte of operational data exactly where it belongs: inside your perimeter, under your control.

For teams already stretched thin by alert fatigue and on-call burnout, that is not just a technical improvement. It is operational leverage without compromise.