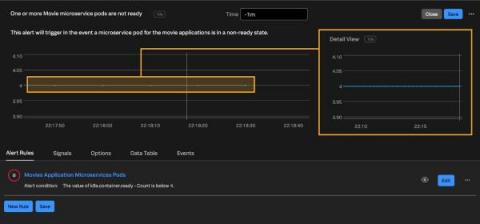

Kubernetes Monitoring: An Introduction

One of the first things you’ll learn when you start managing application performance in Kubernetes is that it is, in a word, complicated. No matter how well you’ve mastered performance monitoring for conventional applications, it’s easy to get lost inside a Kubernetes cluster.