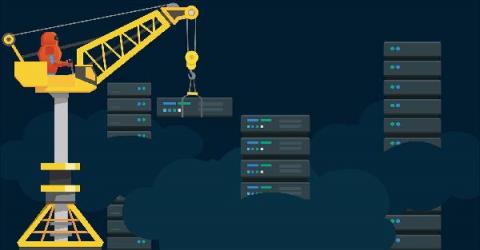

Amazon ECR Unpacked: How It Works And Why It Matters

If you are running containers on AWS, you need a secure place to store and share your images. Amazon Elastic Container Registry (ECR) is a managed registry that handles image storage, scanning, permissions, and versioning, so you don't have to operate registry infrastructure yourself. In this guide, you'll learn what Amazon ECR is, how it works, its key features, its real-world benefits, and how it is priced. You'll also see a cost intelligence approach to keeping ECR costs under control.