Latest News

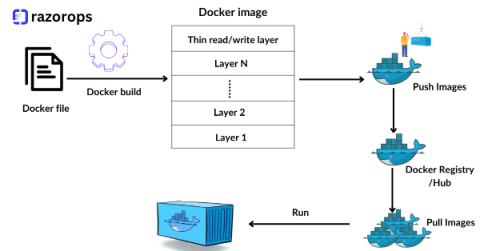

Dockerfile Best Practices for DevOps Engineers

Containerization has become a cornerstone of modern software development and deployment. Docker, a leading containerization platform, has revolutionized the way applications are built, shipped, and deployed. As a DevOps engineer, mastering Docker and understanding best practices for Dockerfile creation is essential for efficient and scalable containerized workflows. Let’s delve into some crucial best practices to optimize your Dockerfiles.

How To Build Your DevOps Toolchain Effectively

In software development, adopting DevOps practices has become imperative for teams aiming to streamline workflows, boost collaboration, and deliver high-quality products efficiently. At the heart of a successful DevOps implementation lies a robust toolchain: a set of interconnected tools and technologies designed to automate, monitor, and manage the software development lifecycle.

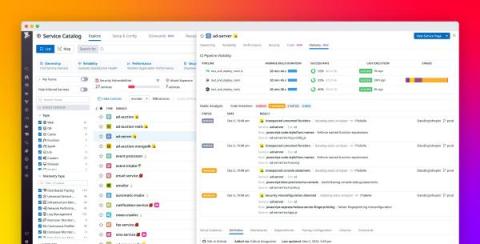

Improve your shift-left observability with the Datadog Service Catalog

Your applications are only as powerful as they are iterable. To keep up with their rapidly changing production environments, your teams need reliable CI/CD systems that implement best practices—including build and test automation, flaky test management, and deployment management. By optimizing their CI/CD pipelines, your teams can build their apps more efficiently, deploy them more safely, and catch bugs and security vulnerabilities before they make it to production.

Kubernetes

Kubernetes (also known as k8s or “kube”) is an open source container orchestration platform that automates many of the manual processes involved in deploying, managing, and scaling containerized applications.

Stop guessing: Master canary deployments with Ocean CD Baseline

How Cloudsmith Helped Protect the Software Supply Chain in 2023

As the "new guy" here at Cloudsmith (I was named CEO in August), I'm learning more every day about how customers use us to protect their software supply chains. We're doing everything we can to give you a single source of truth for every artifact that enters your software supply chain, whether it's an open source package, a Docker container, or a Linux image, and for everything that you produce on the other side.

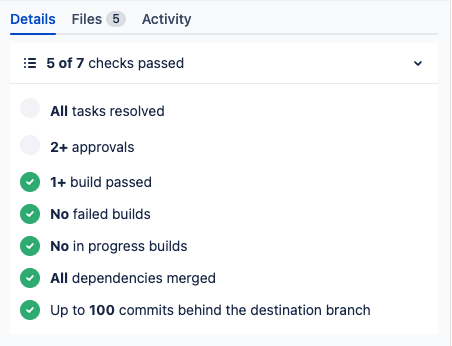

Custom Merge Checks in Bitbucket Cloud

The Benefits of using containerization in DevOps workflows

When software development cycles demand rapid deployment and scalability, traditional infrastructure struggles to keep pace. Enter containerization, a technology that helps DevOps teams streamline their workflows, enhance portability, and drive efficiency in software development and deployment.

Testing a PyTorch machine learning model with pytest and CircleCI

PyTorch is an open-source machine learning (ML) framework that accelerates the path from research prototyping to production deployment. You can work with PyTorch using regular Python without delving into the underlying native C++ code. It contains a full toolkit for building production-worthy ML applications, including layers for deep neural networks, activation functions and optimizers. It also has associated libraries for computer vision and natural language processing.