Logging

The latest News and Information on Log Management, Log Analytics and related technologies.

Announcing Graylog 2.4.6

Today we are releasing Graylog v2.4.6 to fix a few bugs.

Kubernetes Logging with Fluentd and Logz.io

Kubernetes is developing so rapidly that it has become challenging to stay up to date with the latest changes (Heapster has been deprecated!). The ecosystem around Kubernetes has exploded with new integrations developed by the community, and the field of logging and monitoring is one such example.

Journey from Common Logs to Structured Events!

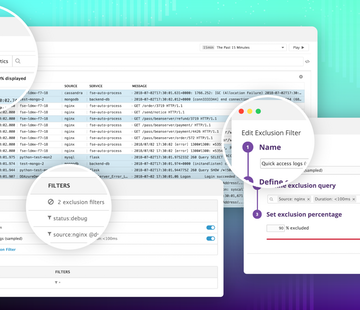

Introducing Logging without Limits

Traditional logging solutions require teams to provision and pay for a daily volume of logs, which quickly becomes cost-prohibitive without some form of server-side or agent-level filtering. But filtering your logs before sending them inevitably leads to gaps in coverage, and often filters out valuable data.

Large-Scale Log Management Deployment with Graylog: A User Perspective

Juraj Kosik, an Infrastructure Security Technical Lead at Deutsche Telekom Pan-Net, has written a detailed case study of how his organization implemented Graylog to centralize more than 1 TB/day of log data from multiple data centers. His case study provides thorough insights into real-world issues you might run into when implementing and operating a log management platform in a large-scale cloud environment.

Masters of Machines

Securing the Sumo Logic Service

The Importance of Historical Log Data

Centralized log management lets you decide who can access log data without giving those people access to the servers themselves. You can also correlate data from different sources, such as the operating system, your applications, and the firewall. Another benefit is that users do not need to log in to hundreds of devices to find out what is happening. You can also use data normalization and enhancement rules to create value for people who might not be familiar with a specific log type.
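To make the normalization and correlation idea concrete, here is a minimal Python sketch. It is not taken from Graylog or any other product: the two log line formats, the field names, and the helper functions `normalize` and `correlate_by_ip` are all hypothetical, chosen only to show how lines from an operating system and a firewall could be mapped onto one shared schema and then grouped by source IP.

```python
import re
from datetime import datetime

# Hypothetical line formats for illustration; real sources will differ.
OS_RE = re.compile(
    r"^(?P<ts>\S+) (?P<host>\S+) sshd: Failed password for (?P<user>\S+) from (?P<ip>\S+)$"
)
FIREWALL_RE = re.compile(r"^(?P<ts>\S+) DROP src=(?P<ip>\S+) dst=(?P<dst>\S+)$")


def normalize(line):
    """Map a raw log line onto a shared schema, or return None if unrecognized."""
    m = OS_RE.match(line)
    if m:
        return {
            "time": datetime.fromisoformat(m["ts"]),
            "source": "os",
            "event": "login_failed",
            "src_ip": m["ip"],
        }
    m = FIREWALL_RE.match(line)
    if m:
        return {
            "time": datetime.fromisoformat(m["ts"]),
            "source": "firewall",
            "event": "packet_dropped",
            "src_ip": m["ip"],
        }
    return None


def correlate_by_ip(events):
    """Group events by source IP and keep IPs seen by more than one source."""
    grouped = {}
    for event in events:
        grouped.setdefault(event["src_ip"], []).append(event)
    return {
        ip: sorted(evts, key=lambda e: e["time"])
        for ip, evts in grouped.items()
        if len({e["source"] for e in evts}) > 1
    }


raw_lines = [
    "2018-07-16T10:00:05 web01 sshd: Failed password for root from 203.0.113.7",
    "2018-07-16T10:00:01 DROP src=203.0.113.7 dst=10.0.0.5",
    "2018-07-16T10:02:00 DROP src=198.51.100.9 dst=10.0.0.5",
]
events = [e for e in map(normalize, raw_lines) if e is not None]
correlated = correlate_by_ip(events)
```

Once every source shares the same field names, someone unfamiliar with the firewall's native format can still read the combined timeline for a given IP address.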

The Complete Guide to the ELK Stack - 2018

With millions of downloads for its various components since first being introduced, the ELK Stack is the world’s most popular log management platform. In contrast, Splunk — the historical leader in the space — self-reports 15,000 customers total. But what exactly is ELK, and why is the software stack seeing such widespread interest and adoption? Let’s take a deeper dive.