The latest News and Information on Monitoring for Websites, Applications, APIs, Infrastructure, and other technologies.

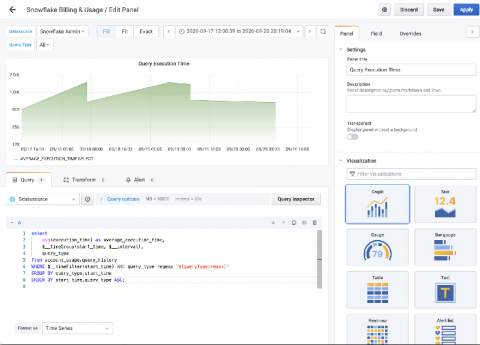

Introducing the Snowflake Enterprise plugin for Grafana

Snowflake offers a cloud-based data storage and analytics service, generally termed “data warehouse-as-a-service.” Its main benefit is that you pay only for the compute and storage you actually use, so it’s not “just another database.” Snowflake has become very popular over the last few years by allowing enterprise users to affordably store and analyze data using cloud-based hardware and software, culminating in a huge IPO just a couple of weeks ago.

Coralogix - On-Demand Webinar: Scaling Observability

Best Practices for Hardware Monitoring

The biggest challenge in hardware monitoring is that much of the infrastructure comes from different manufacturers. While these manufacturers provide solid solutions for monitoring their own hardware, it can be very difficult to oversee monitoring across all of it. Hardware monitoring software with an integrated console can monitor all the hardware in the server ecosystem. Hardware monitoring should be an integral part of managing servers and infrastructure.

Monitoring and fashion: we are also trend experts

Not that system monitoring has much to do with NY Fashion Week or the most avant-garde venues in London’s Soho, but they share the yearning for something new, something fruitful and original. And since we are also some kind of modern hipsters obsessed with everything cool and trendy, today we will review some of the new trends in monitoring and the most recent news that affects our field.

OpsRamp Integrates with Amazon EventBridge for Better Automation

Enterprises have relied on public cloud providers to shift and transform their legacy workloads using hyperscale infrastructure, but it is vital to ensure that business-critical services are running optimally. Cloud events help developers and operators understand how their workloads hosted on different cloud services are performing at any given time.

Application Performance Monitoring 101

In this guide, let’s dive deep into Application Performance Monitoring (APM) and how it works. We’re going to establish the difference between monitoring and management. Additionally, we’ll look at how to leverage APM’s full potential and its role across the different parts of the organization, not just the technical department. Modern applications bring value to every organization in today’s information age.

Is unreliable software impacting the happiness of your customers? Interlink's SRE solution might just be the answer!

Site Reliability Engineering (SRE) is playing an increasingly pivotal role in supporting hybrid-cloud, DevOps environments, where Dev teams need to release updates fast and Ops need to avoid errors and failures in production. Powered by integrations to monitoring, orchestration, provisioning and ITSM tools, Interlink’s SRE solution brings improved understanding of where threats to the health of your IT services might lurk within DevOps workflows.

Downsampling with InfluxDB v2.0

Downsampling is the process of aggregating high-resolution time series within windows of time and then storing the lower-resolution aggregation in a new bucket. For example, imagine that you have an IoT application that monitors temperature. Your temperature sensor might collect data at one-minute intervals, but that data is really only useful to you during the day.
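The windowed aggregation described here can be illustrated with a minimal sketch in plain Python. This is not InfluxDB's own API or a Flux task; the function name `downsample`, the choice of the mean as the aggregate, and the sample readings are all assumptions made for illustration.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from statistics import mean


def downsample(points, window):
    """Average (timestamp, value) points within fixed windows of time.

    Hypothetical helper: returns a list of (window_start, mean_value)
    tuples sorted by window start.
    """
    epoch = datetime(1970, 1, 1)
    buckets = defaultdict(list)
    for timestamp, value in points:
        # Truncate each timestamp down to the start of its window.
        offset = (timestamp - epoch) % window
        buckets[timestamp - offset].append(value)
    return [(start, mean(values)) for start, values in sorted(buckets.items())]


# Made-up readings: one temperature value per minute for ten minutes.
start = datetime(2020, 10, 1, 12, 0)
readings = [(start + timedelta(minutes=i), 20.0 + i) for i in range(10)]

# Ten one-minute points collapse into two five-minute averages.
for window_start, average in downsample(readings, timedelta(minutes=5)):
    print(window_start.isoformat(), average)
```

In a real deployment the lower-resolution output would be written to a separate bucket, as the paragraph describes; the sketch only shows the aggregation step.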

10 network tools every IT admin needs

Remember when native commands like ping and ipconfig were adequate for network inspection? With networks becoming more dynamic, these generic tools don’t seem to make the cut anymore. IT admins are on a constant quest for ad hoc network tools and utilities that can address specific network management needs. Using individual network diagnostic tools, on the other hand, requires constant tab switching and comparing data pulled by independent tools to pinpoint your network issue.