Workspaces: Unlock the Power of Multi-tenancy

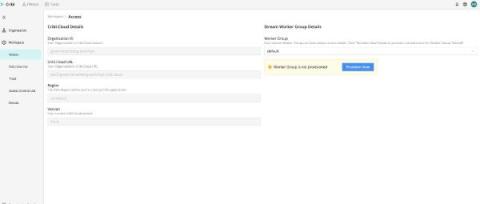

Large enterprises have long needed secure, segregated instances that they can operate independently within their cloud deployments. Whether you need to segregate your data between departments (ITOps vs.