Operations | Monitoring | ITSM | DevOps | Cloud

The latest News and Information on Observability for complex systems and related technologies.

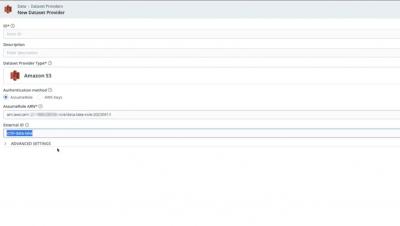

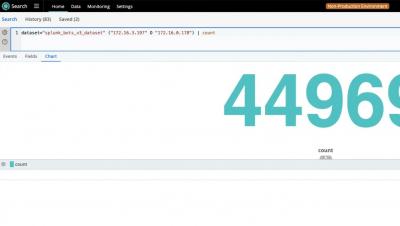

Setting Up a Data Loop using Cribl Search and Stream Part 3: Send Data from Cribl Search to Stream

Setting Up a Data Loop using Cribl Search and Stream Part 4: Putting it All Together

Observability: Working with Metrics, Logs and Traces

The concept of observability centers around collecting data from all parts of the system to provide a unified view of the software at large. Fault tolerance, no single point of failure and redundancy are prominent design principles in modern software systems. But that doesn’t mean errors, degradation, bugs or even the occasional catastrophe don’t happen.

Customer-Centric Observability: Experiences, Not Just Metrics

Martin and Jess recently conversed with Todd Gardner of RequestMetrics as part of the O11ycast podcast. We don’t normally write blogs based on these conversations, but there were impactful comments in that episode that bear repeating. You can listen to the full conversation if you wish. Let’s get into it!

Modernize Your SIEM Architecture

A Step-by-Step Guide to Standardizing Telemetry with the BindPlane Observability Pipeline

Rollouts in BindPlane OP

Performance Ratings and Experience Scores for Meaningful Alerting and Rapid Observability

Administrators and IT management are increasingly leveraging simple, quantifiable KPIs such as “Performance Ratings” to gain rapid overviews and track key outcomes. Modern IT architectures are designed and built to scale and be resilient. Systems are now usually built to handle failover and to auto-scale up and down in response to varying demand and to workloads with very different properties and needs.

What Is a Telemetry Pipeline?

In a simple deployment, an application emits spans, metrics, and logs, which are sent to api.honeycomb.io and show up in charts. This works for small projects and for organizations that do not control outbound access from their servers. If your organization has more components, has network rules, or requires tail-based sampling, you’ll need to create a telemetry pipeline.
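
To make the tail-based sampling requirement concrete, here is a minimal sketch of the idea: the keep-or-drop decision is made per trace, after all of its spans have arrived, which is why it needs a pipeline stage that can see the whole trace. The span shape (`trace_id`, `error`), the function names, and the default rate are illustrative assumptions, not the API of Honeycomb or any particular pipeline product.

```python
import hashlib
from collections import defaultdict


def keep_trace(trace_id, spans, sample_rate):
    """Decide on a whole trace once all of its spans are available."""
    # Assumed policy: always keep traces that contain an error span.
    if any(span.get("error") for span in spans):
        return True
    # Otherwise keep a deterministic fraction, keyed on the trace ID,
    # so every span of a trace gets the same decision.
    digest = hashlib.sha256(trace_id.encode()).digest()
    bucket = int.from_bytes(digest[:8], "big")  # integer in [0, 2**64)
    return bucket < sample_rate * 2**64


def tail_sample(spans, sample_rate=0.1):
    """Group spans by trace, then keep or drop each trace as a unit."""
    by_trace = defaultdict(list)
    for span in spans:
        by_trace[span["trace_id"]].append(span)

    kept = []
    for trace_id, trace_spans in by_trace.items():
        if keep_trace(trace_id, trace_spans, sample_rate):
            kept.extend(trace_spans)
    return kept
```

A real pipeline would also need to decide when a trace counts as complete (for example, with a timeout), which this sketch leaves out.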