Grafana Vs Graphite

The amount of data being generated today is unprecedented. In fact, more data has been created in the last two years than in the entire history of the human race. With such volume, it’s crucial for companies to be able to harness their data to further their business goals. A big part of this is analyzing data and spotting trends, and this is where solutions such as Graphite and Grafana become critical.

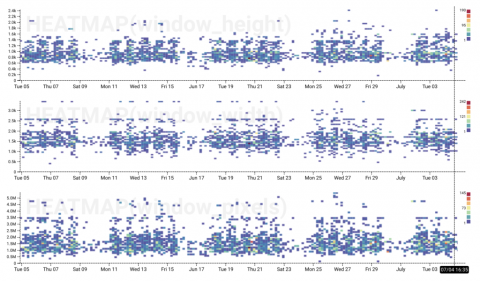

Level Up With Derived Columns: Understanding Screen Size (With Basic Arithmetic)

When we released derived columns last year, we already knew they were a powerful way to manipulate and explore data in Honeycomb, but we didn’t realize just how many different ways folks could use them. We use them all the time to improve our perspective when looking at data as we use Honeycomb internally, so we decided to share. So, in this series, Honeycombers share their favorite derived column use cases and explain how to achieve them.

You Can Improve Your Customer Satisfaction Charlie Brown!

What’s surprising to see today is how business operations struggle to get an integrated view of all business metrics. With greater volumes of data being collected, data analysts just can’t keep up with the pace. This state of affairs alone doesn’t hit as hard as the fact that many in data analytics have just come to accept this situation as a norm and simply bear with this daily struggle.

GrafanaCon Recap: The State of TSDB

At GrafanaCon EU, we gathered representatives of the Graphite, Prometheus, InfluxDB, and Timescale projects in the hopes of starting a spirited conversation about the current state of Time Series Databases. They didn’t disappoint! Here are a few highlights from the TSDB panel featuring Erik Nordstrom from Timescale, Dan Cech from Graphite, Paul Dix from InfluxDB, and Tom Wilkie from Prometheus, and moderated by Grafana Labs co-founder and CEO Raj Dutt.

Instrument Your Python App Automatically With The Honeycomb Beeline for Python

We’ve been on a roll this year with Beelines, our integrations for quick, easy, and automagic instrumentation of your apps. You may have already seen our Node.js, Ruby, and Go beelines – today, we’re excited to announce the release of the Honeycomb Beeline for Python!

timeShift(GrafanaBuzz, 1w) Issue 51

This week we’re proud to announce Grafana v5.2.1 stable is now available! Learn more and download the new stable release below, install the latest plugin updates, and check out this week’s collection of articles from around the web. Enjoy!

The Importance of Historical Log Data

Centralized log management lets you decide who can access log data without giving them access to the servers themselves. You can also correlate data from different sources, such as the operating system, your applications, and the firewall. Another benefit is that users do not need to log in to hundreds of devices to find out what is happening. You can also use data normalization and enhancement rules to create value for people who might not be familiar with a specific log type.

Evolution of Telemetry at Bloomberg

With 5,000 engineers, 325,000 customers running its software, two fully owned and operated data centers, 200 node sites around the world, and a diverse architecture developed over almost four decades, Bloomberg has that many reasons to be committed to monitoring.

Fluentd vs. Fluent Bit: Side by Side Comparison

We all like a pretty dashboard. For us data nerds, there’s something extremely enticing about the colors and graphs depicting our environment in real-time. But while Kibana and Grafana bask in glory, there is a lot of heavy lifting being done behind the scenes to actually collect the data.