timeShift(GrafanaBuzz, 1w) Issue 52

It’s good to be back from our short holiday break. This week we have articles on the world’s fastest internet (with a shout-out to Prometheus and Grafana), visualizing real-time and historic weather data, making teams more autonomous, and Grafana + Prometheus + Postgres + TimescaleDB.

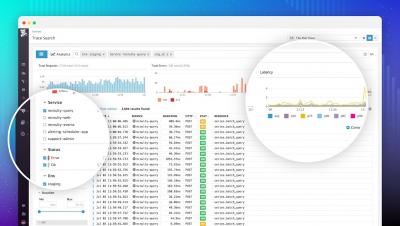

Trace Search & Analytics with infinite cardinality

Watchdog: Detect performance anomalies automatically

Guest Blog Post: Ballerina Makeover with Grafana

In this guest blog post from the folks at Ballerina, Anjana shows you how you can easily visualize metrics from a Ballerina service with Grafana, walking you step by step through the installation and configuration of the components. They’ve also extended an offer for a free ticket to their upcoming Ballerinacon to the Grafana community.

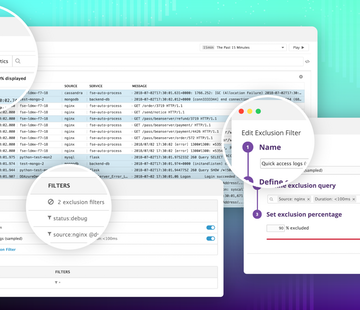

Introducing Logging without Limits

Traditional logging solutions require teams to provision and pay for a daily volume of logs, which quickly becomes cost-prohibitive without some form of server-side or agent-level filtering. But filtering your logs before sending them inevitably leads to gaps in coverage, and often filters out valuable data.

Metrics At Scale: Finding Data Insights From Relationships Between Different Metrics (Part 3)

In our previous article (How to Scale and Manage Millions of Metrics), we looked at correlations in terms of name similarity, but there are other types of similarities that occur between metrics.

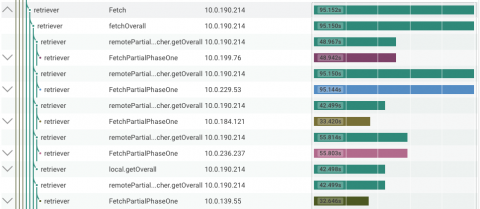

There And Back Again: A Honeycomb Tracing Story

In our previous post about Honeycomb Tracing, we used tracing to better understand Honeycomb’s own query path. When doing this kind of investigation, you typically have to go back and forth, zooming out and back in again, between your analytics tool and your tracing tool, often losing context in the process.

Elasticsearch SQL Support

Elasticsearch 6.3 introduced several major new features, such as rollups and Java 10 support, but one of the most intriguing additions in this version is SQL support.

Large-Scale Log Management Deployment with Graylog: A User Perspective

Juraj Kosik, an Infrastructure Security Technical Lead at Deutsche Telekom Pan-Net, has written a detailed case study of how his organization implemented Graylog to centralize more than 1 TB/day of log data from multiple data centers. The case study offers thorough insight into real-world issues you might run into when implementing and operating a log management platform in a large-scale cloud environment.