IT security under attack blog series: Instant domain persistence by registering a rogue domain controller

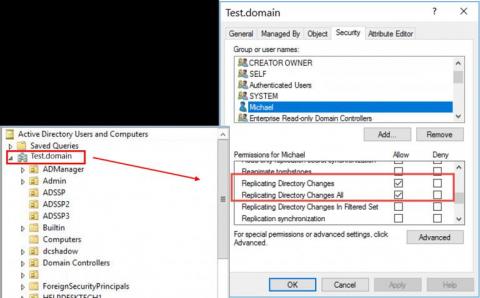

In this installment of the IT security under attack series, we look at DCShadow, an advanced attack that abuses Active Directory (AD) domain controllers (DCs) to gain persistence in AD environments. DCShadow is a late-stage kill chain attack: a threat actor who already holds domain admin or enterprise admin credentials leverages AD's replication mechanism to register a rogue domain controller, then uses it to inject backdoor changes into an AD domain.