
The latest News and Information on Log Management, Log Analytics and related technologies.

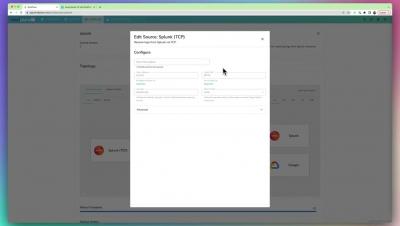

How to use Splunk Universal Forwarders With BindPlane OP

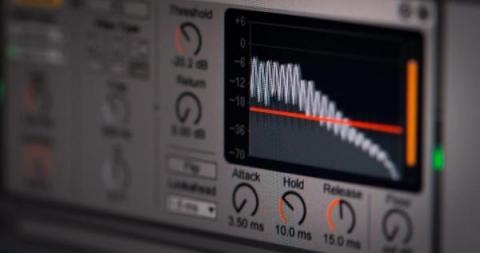

What Is Adaptive Thresholding?

Adaptive thresholding is a term used in computer science and, more specifically, in IT Service Intelligence (ITSI) for analyzing historical data to determine thresholds for key performance indicators (KPIs) in your IT environment. Among other things, it’s used to govern KPI outliers so that performance monitoring alerts are more meaningful and trusted.
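To make the idea concrete, here is a minimal sketch of adaptive thresholding in Python. It is not how ITSI implements the feature. It assumes the historical KPI data arrives as `(hour_of_day, value)` pairs and that a band of the mean plus or minus `k` standard deviations per hour is an acceptable threshold. The function names `adaptive_thresholds` and `is_outlier` are hypothetical.

```python
from statistics import mean, stdev


def adaptive_thresholds(history, k=2.0):
    """Derive a (low, high) band per hour of day from historical KPI values.

    history: iterable of (hour_of_day, value) pairs (an assumed input shape).
    k: how many standard deviations from the mean count as normal.
    """
    by_hour = {}
    for hour, value in history:
        by_hour.setdefault(hour, []).append(value)

    thresholds = {}
    for hour, values in by_hour.items():
        if len(values) < 2:
            continue  # not enough history to estimate spread
        mu = mean(values)
        sigma = stdev(values)
        thresholds[hour] = (mu - k * sigma, mu + k * sigma)
    return thresholds


def is_outlier(thresholds, hour, value):
    """Return True when value falls outside the learned band for that hour."""
    bounds = thresholds.get(hour)
    if bounds is None:
        return False  # no baseline for this hour, so do not alert
    low, high = bounds
    return value < low or value > high
```

Because the band is learned per hour, a value that is normal at 9 a.m. can still be flagged at 3 a.m., which is the difference from a single static threshold.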

Your First 100 Days With Cribl: Why Having an Onboarding Process Matters

The process of adding new data to operations and security analytics tools is familiar to admins. New data onboarding can be a tiresome process that takes up too much time and delays getting value from the new data. The process typically begins with the admin engaging the data source owner, getting the wrong data sample, and then having to try again.

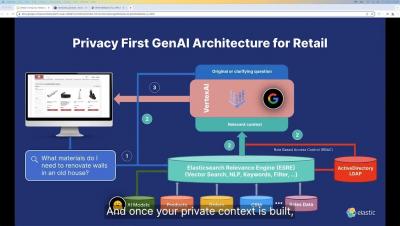

Turning Your Retail eCommerce into a Platform of Engagement and Loyalty

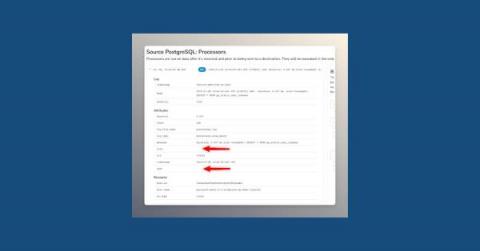

How to Remove Fields with Empty Values From Your Logs

Continuous Observability: Shedding Light on CI/CD Pipelines

DevOps is not just about operating software in production, but also releasing that software to production. Well-functioning continuous integration/continuous delivery (CI/CD) pipelines are critical for the business, and this calls for quality observability to ensure that Lead Time for Changes is kept short and that broken and flaky pipelines are quickly identified and remediated.

Democratizing Data Through Secure Self-Service Concierge Access of Cribl Stream

Ah, the age-old question of how to manage screen time for kids – it’s like trying to navigate a minefield of Peppa Pig, Paw Patrol, and PJ Masks! I mean, who knew Octonauts and Bubble Guppies would become household names? As a dad of two young kids, managing screen time is a balancing act, especially keeping our 5-year-old happy with access to her shows.

Cribl Stream Projects

Getting Started with GROK Patterns

If you’re new to logging, you might be tempted to collect all the data you possibly can. More information means more insights; at least, that is what those NBC “the more you know” public service announcements told you. Unfortunately, you can create new problems if you do too much logging. To streamline your log collection, you can filter messages directly at the log source. However, to parse the data, you may need to use a Grok pattern.
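As a rough illustration of what a Grok pattern does, the Python sketch below expands `%{NAME:field}` tokens into named regular-expression groups and applies the result to a log line. The entries in `PATTERNS` are simplified stand-ins chosen for this example, not the official pattern definitions shipped with any logging tool. The helper names `grok_to_regex` and `parse` and the sample log line are also hypothetical.

```python
import re

# Simplified stand-ins for a few common Grok pattern names (assumptions,
# not the official definitions used by real Grok implementations).
PATTERNS = {
    "IP": r"\d{1,3}(?:\.\d{1,3}){3}",
    "WORD": r"\w+",
    "NUMBER": r"-?\d+(?:\.\d+)?",
    "URIPATH": r"/\S*",
}

# Matches %{NAME} or %{NAME:field}
GROK_TOKEN = re.compile(r"%\{(\w+)(?::(\w+))?\}")


def grok_to_regex(pattern):
    """Expand each Grok token into a (named) regex group and compile it."""
    def expand(match):
        name, field = match.group(1), match.group(2)
        body = PATTERNS[name]
        # Tokens with a field name capture; tokens without one only match.
        return f"(?P<{field}>{body})" if field else f"(?:{body})"

    return re.compile(GROK_TOKEN.sub(expand, pattern))


def parse(pattern, line):
    """Return the extracted fields as a dict, or None if the line does not match."""
    m = grok_to_regex(pattern).search(line)
    return m.groupdict() if m else None


line = "192.168.1.10 GET /index.html 200"
pattern = "%{IP:client} %{WORD:method} %{URIPATH:path} %{NUMBER:status}"
fields = parse(pattern, line)
```

Every extracted value is a string here; converting `status` to an integer would be a separate step in this sketch.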