
The latest News and Information on DevOps, CI/CD, Automation and related technologies.

What I learned from leading my first incident

A few weeks ago we had a major incident. We were releasing our Practical Guide to Incident Management, and after we posted about it online, an incident.io employee noticed that the page wasn’t loading. Just to set the scene, I’ve been at incident.io for 3 months and didn’t have any experience of incidents in my previous role. When the team got paged, I expected this to be one of those “follow along and learn how the wizards work their magic” exercises.

A CFO's Guide To Evaluating Cloud Spend

Integrate with Zabbix | Moogsoft Product Videos & How-Tos

Change Failure Rate explained

This post is the third in a series of deeper dive articles discussing DORA metrics. The third metric we’ll examine, Change Failure Rate, is a lagging indicator that helps teams and organizations understand the quality of the software they have shipped, and it offers guidance on what the team can do to improve in the future.
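
As a rough illustration of how the metric is usually computed, Change Failure Rate is commonly taken as the share of production deployments that led to a failure needing remediation. The sketch below assumes that definition; the `Deployment` record, its `caused_failure` flag, and the sample data are hypothetical and do not come from the article.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """Hypothetical record of one production deployment."""
    id: str
    caused_failure: bool  # assumption: set when the change needed a rollback or hotfix


def change_failure_rate(deployments):
    """Return failed deployments divided by total deployments (0.0 if there are none)."""
    if not deployments:
        return 0.0
    failed = sum(1 for d in deployments if d.caused_failure)
    return failed / len(deployments)


# Made-up sample data: one of four deployments caused a failure.
deployments = [
    Deployment("d1", False),
    Deployment("d2", True),
    Deployment("d3", False),
    Deployment("d4", False),
]

rate = change_failure_rate(deployments)
print(f"Change Failure Rate: {rate:.0%}")  # prints "Change Failure Rate: 25%"
```

What counts as a "failure" varies between teams, so the flag would need to follow whatever definition a team has agreed on.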

Insurance Provider Reduces Software Licensing Costs, Saving Millions

Speedscale Traffic Replay is now v1.0

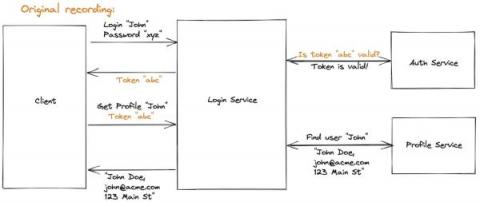

Nate Lee here, and I’m one of the founders of Speedscale. The founding team has worked at several observability and testing companies like New Relic, Observe Inc, and iTKO over the last decade. Speedscale traffic replay was born out of frustration with reacting to problems (even minor ones) that could have been prevented with better testing.

Civo Update - July 2022

In June, we hosted an online meetup with ContainIQ on k8s monitoring and observability. If you missed it, you can catch up on the discussion between Matthew Lenhard (Co-founder & CTO of ContainIQ) and Kai Hoffman (Developer Advocate at Civo) here. Meanwhile, Kamesh Sampath from our Developer Advocate demo program explains why Civo’s speed and developer experience are great to work with in our latest Civo Shorts.